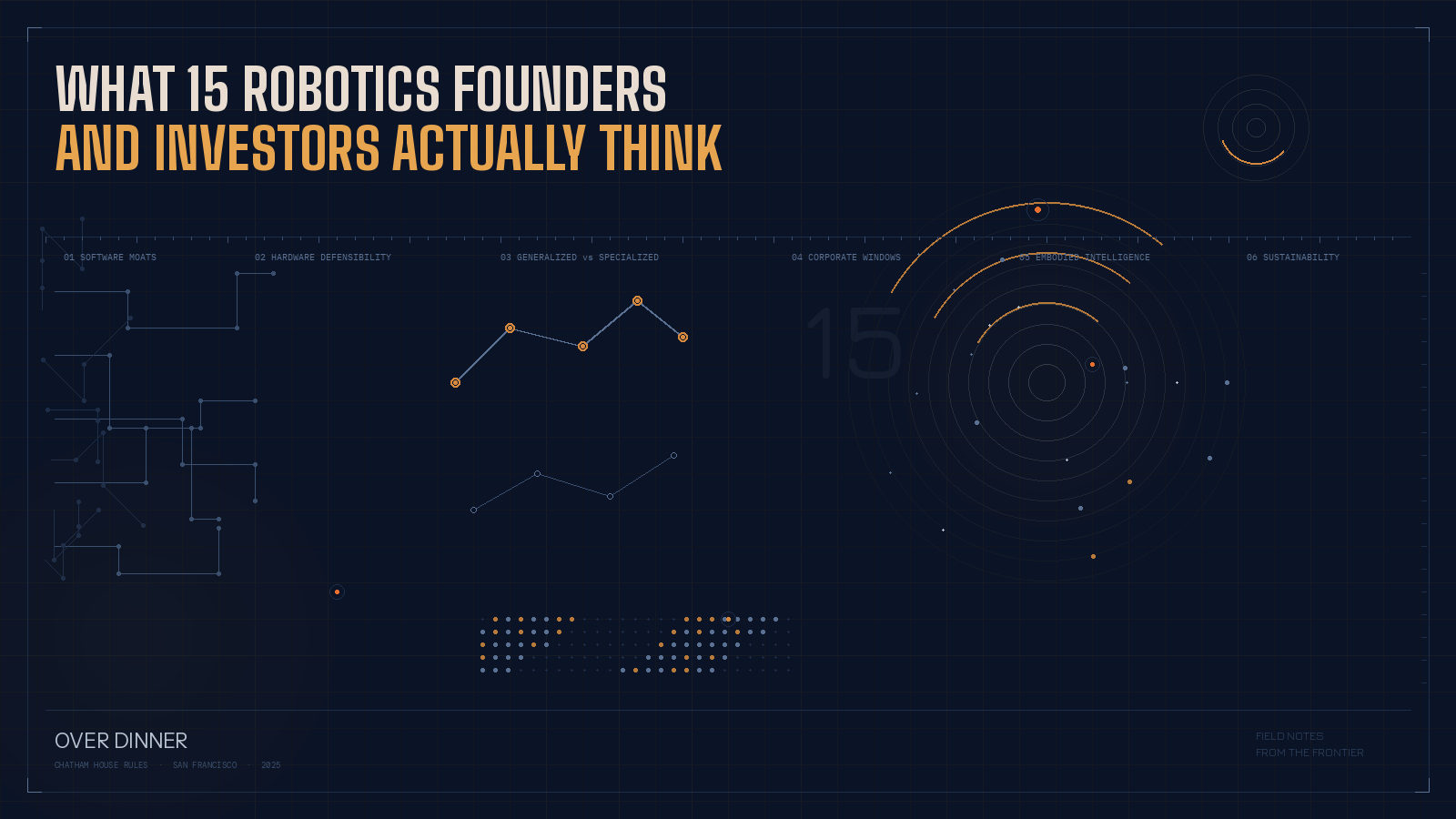

What 15 Robotics Founders and Investors Actually Think Over Dinner

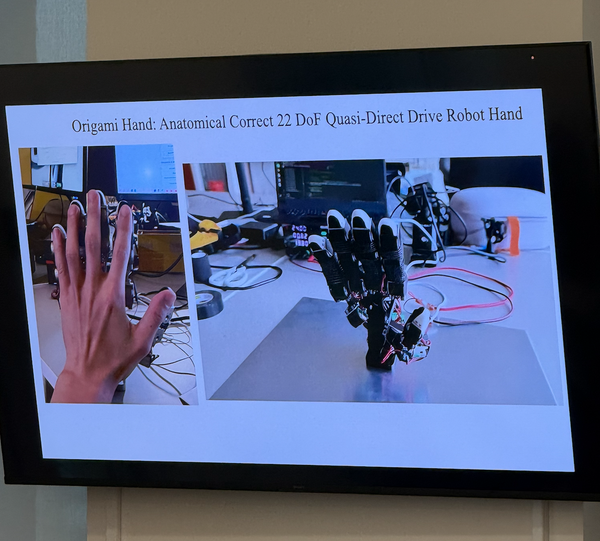

A few weeks ago, I hosted a small dinner in San Francisco — about fifteen people around a table. Founders building robotic hands, mobile manipulators, warehouse systems, autonomous vehicles, and world models for manipulation. Investors from early-stage funds to multi-stage industrial tech platforms. A few operators. Everyone had skin in the game.

We went around the table. Good things, bad things, things we need help with. What started as intros turned into a three-hour conversation that I think captures where physical AI actually stands right now — not the conference keynote version, but the real one. Here's what came up.

"I Built the Same Thing in Two Days"

The most visceral moment of the night came when a six-time founder shared a story about a friend — a software entrepreneur with two years of runway and respected investors on his cap table.

This founder had spent two years building a sophisticated cryptography algorithm. As an experiment, he sat down over a weekend and asked Claude Code to rebuild it. It worked. High quality. Two days.

His reaction wasn't excitement. It was existential dread. "I feel useless right now," he told the table. "Everything we built is going to be useless. If somebody sees that it works, they'll repeat it in two weeks."

So he called his investors — a well-known, well-respected firm. Their response was startling in its honesty: "We also don't know what to do. Most of our software portfolio just became heritage. We feel like there's no big value behind this anymore."

The table went quiet for a second. Then someone else jumped in: their company had recently helped a Chinese robotics firm build an entire SDK, full stack and pip-installable on any machine. A competitor had spent $1.2 million and months of engineering time on the same deliverable. It took one software engineer and an AI coding assistant two days.

"I told my engineer, you just did $1.2 million worth of work. In two days."

This wasn't theoretical. These were real contracts, real companies, real money, and the cost of building software has fallen so far in six months that even the investors funding these companies can't keep up with the implications.

The natural question someone asked: "So what do we do?"

The answer, from multiple people at the table: build the robot.

The Moat Isn't Code. It's Showing Up.

If software is commoditizing at this pace, where does value actually live?

One investor framed it cleanly: very few technology businesses have ever been enduringly defensible on technology alone. Even before current AI coding tools, a team of twenty could rebuild most top-ten SaaS products in a few sprints. Those businesses were still worth billions — because value lived in owning the customer, the distribution, the data entrenchment. You can rebuild Salesforce tomorrow. Good luck getting anyone to buy your CRM.

In robotics, the argument gets even stronger. The investor drew a comparison to language models: OpenAI has its ChatGPT moment, and the very next day, the infrastructure exists for an infinitely scalable application layer on top of it. Cursor can serve a hundred million customers immediately.

That will never be true in robotics. The performance bar is higher. The deployment is physical. The customer trust is earned unit by unit, site by site.

So the founders who are spending real time right now — winning customer trust, building supply chains, doing the unglamorous integration work with warehouse management systems and legacy equipment — they're building the infrastructure to scale rapidly whenever the model layer crosses the performance threshold. They can't be replaced overnight by someone with a better algorithm, because the algorithm was never the hard part.

One founder building industrial mobile manipulators put it simply: they've got four units deployed, they're meeting production-level customer metrics, and now the question is how to go from ten to a hundred. The bottleneck isn't intelligence. It's data (old industries don't digitize well), customer commitment structures (everyone wants project-based leases, not long-term contracts), and the grinding operational work of making robots reliable in complex, unstructured environments.

That work is the moat. Not the model.

The Humanoid Heresy

One founder admitted he'd told a humanoid summit that he doesn't believe in humanoids. At a humanoid summit. To humanoid people.

His argument is more nuanced than pure contrarianism. He frames it around what he calls "under-humanoids" and "over-humanoids." Under-humanoids are simpler than human form — maybe a single arm on a mobile base doing manufacturing tasks. Over-humanoids are more capable than human form — four legs, four arms, 360-degree vision, no spinal limitations. Things that can sit under a sofa and unfold, or run at speeds and lift at forces no human body allows.

The paradox he keeps coming back to: today's most impressive humanoids can do backflips, but they still can't unload a dishwasher. And the dishwasher is the harder problem.

Another founder — who spent a decade in automotive — extended the analogy. If you asked an AI to design the single best car for the most use cases, you'd get a Toyota Camry. But you'd never deliver Amazon packages with a Camry. That's why Amazon has a Rivian van. Form factor follows function, and manufacturing has hundreds of distinct functions.

The automotive parallel cuts deeper than people realize. One attendee pointed out that in a modern auto factory producing a pickup truck every sixty seconds, there might be thirty-six people on the floor. One of them exists solely to move a pallet from one spot to another — and the only reason that job hasn't been automated is union agreements, not technical limitations.

The real action in factory robotics isn't replacing humans wholesale with humanoid form factors. It's automating the specific dull, repetitive, physically demanding tasks that humans shouldn't be doing — drawing lines, drilling holes, spraying coatings, moving bins — with purpose-built systems that are reliable enough to earn their way into production.

One founder described his approach: instead of building robots for the open, unpredictable world, build enclosed environments — "cages" — where you release twenty, thirty, a hundred robots. Inside the cage, you control the physics. You minimize unpredictability. And you achieve performance no human can match: moving beams at five meters per second, retrieving items from nine meters up. It's less cinematic than a humanoid walking through a kitchen. It's also deployable today.

Generalized Brain vs. Specialized Muscle

The deepest technical debate of the night was whether robotics will follow the language model playbook — a few massive foundation models that everyone builds on — or whether it'll go a fundamentally different direction.

The generalist case: One investor argued from first principles. The world is designed for humans. Every AI system is ultimately trying to recreate the processing power of the human brain. And a four-year-old child can enter a novel environment and interact with it using pretty good dexterity and intuitive physics — with relatively little task-specific training. It's hard to believe that embodied AI won't eventually reach the same place.

The specialist case: A founder building world models for robotic manipulation pushed back. For language, massive pretraining on internet-scale text data was the unlock. For robotics, you don't have that ocean of data, and you may not need it. You can go straight to post-training: reinforcement learning to master individual tasks at high reliability. Physical Intelligence has shown this works. If you copy that recipe and deploy it across verticals, you get L2/L3 automation (partial autonomy, to borrow the driving-automation levels) that's commercially valuable now, without waiting for the L5 generalized brain (full autonomy).

The data realist case: Someone who'd worked at Waymo offered a grounding perspective. The popular narrative is that Waymo's breakthrough came from switching to ML-based approaches and world models. The reality? The breakthrough came from upgrading their lidar sensors. Only once they had better sensor data could they train the models that actually worked. The lesson: the architecture matters less than the data pipeline. Transformers might be good enough. The question is who controls the data flywheel.

A founder cited a recent Toyota Research paper that found model architecture doesn't significantly matter — transformers, diffusion policies, state-space models all converge to similar performance when trained on the same data. "People always have this misbelief that maybe there's a more generalizable architecture that can magically solve everything. It turns out the algorithm doesn't really matter."

Another founder ran a direct comparison: they trained an action chunking transformer and a diffusion policy on the same robotic manipulation task. The transformer performed better and was easier to train and scale.

Where does this leave us? Probably with two curves. One rises steeply and linearly: task-specific models trained on domain data, commercially useful today, improving incrementally. The other is flat for a long time, then exponential: a truly generalized embodied intelligence model. Nobody at the table could confidently say when — or if — those curves intersect. But almost everyone agreed that the founders building the data pipelines and customer relationships today are best positioned regardless of which curve wins.
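For readers who want to play with the two-curve picture, here is a rough Python sketch. Everything in it is made up for illustration: the function name `first_crossover`, the capability units, and every parameter value are assumptions, not numbers anyone at the dinner offered. It models the specialist curve as a straight line and the generalist curve as an exponential that starts near zero, then scans forward for the first year the exponential catches up.

```python
import math

def first_crossover(base=1.0, slope=1.0, start=0.01, rate=1.0,
                    horizon=30.0, step=0.01):
    """Return the first time t (in years) at which the generalist curve
    reaches the specialist curve, or None if it never does within `horizon`.

    All parameters are hypothetical illustration values:
      specialist(t) = base + slope * t          (useful today, linear gains)
      generalist(t) = start * exp(rate * t)     (flat early, then compounding)
    """
    for i in range(1, int(horizon / step) + 1):
        t = i * step
        specialist = base + slope * t
        generalist = start * math.exp(rate * t)
        if generalist >= specialist:
            return t
    return None

# Fast compounding: the curves cross between years 6 and 7.
fast = first_crossover(rate=1.0)

# Slow compounding: no crossover within the 30-year horizon.
slow = first_crossover(rate=0.05)

print(fast, slow)
```

The point of the toy is how sensitive the answer is. Change one assumed growth rate and the crossover moves from "within a decade" to "not in this horizon", which is roughly why nobody at the table wanted to name a date.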

The Window Is Open (For Now)

Perhaps the most actionable insight of the night: industrial corporations are behaving differently than they were two years ago.

Multiple founders reported the same pattern. Corporate executives attend Davos, or a cybersecurity conference, or an all-hands where the CEO declares that AI will transform their operations. They come back and create dedicated "AI POC budgets" — with real money attached and real pressure to deploy it. One investor said they'd been on the receiving end of panic calls from executives who'd just been told they have minimum AI spend targets and don't know where to start.

The result is that industrial customers are now willing to be patient development partners in a way that would have been unthinkable pre-COVID. One founder described pitching a Fortune 200 healthcare company from his garage. The customer's response: "We know you're sitting in a garage. We know the chances of failure are high. But if we don't do this, we'll never learn." The founder proposed $80,000 for the pilot. The customer came back and offered $125,000.

A major humanoid company just signed contracts with automotive tier suppliers for pilots that won't begin until mid-2027. Two years out. That kind of patience from industrial buyers is unprecedented in robotics.

How long does this window stay open? Nobody knows. But several people at the table pointed out that industrial decision-making moves slowly in both directions. It takes a long time to adopt a new mindset, and it takes a long time to abandon one. If you're a robotics founder, the time to build those corporate relationships is right now — not when your product is perfect, but when the buyer's appetite for risk is at a generational high.

The Part Nobody Talks About

The last thread worth capturing isn't about technology or markets. It's about the humans building all of this.

Multiple founders at the table admitted to sleeping four or five hours a night. One first-time founder, two months out of a big tech job, described already feeling burned out — overwhelmed by the simultaneous demands of fundraising, product development, hiring, and building a network in a new city, with a remote co-founder and no playbook.

An experienced investor observed something broader: across his network, founders and operators are becoming less responsive, less decisive, and more paralyzed. Hardware people — who historically stayed grounded through the physicality of their work — are now caught in the same dopamine-and-anxiety cycle as software founders, because the software layer of their stack changes every week. People are getting addicted to the adrenaline of constant building and can't step off.

One six-time founder put it bluntly: "I see more and more people just dropping off. They're not checking out because they're lazy. They're checking out because the pace is unsustainable and they can't make decisions when everything moves this fast."

Someone recommended a book called Essentialism — the core idea being to build the muscle of ruthless prioritization instead of trying to stay on top of ten things simultaneously. Easy to say. Hard to do when your runway is ticking and your competitor just shipped something built in a weekend with an AI coding assistant.

The honest truth at the table was that nobody has this figured out. The pace of change is genuinely new, the pressure is real, and the founders who survive the next few years will be the ones who find some sustainable way to operate inside the chaos — not the ones who just grind the hardest.

This article was created by Bogdan with help from Superwhisper and Claude Code